Nowadays, almost every initiative, project, and activity can be measured and tracked. When it comes to people programs like mentoring, however, it can be hard to get data that reflects what’s really going on. That’s why we’re so often asked, “How do you actually measure a mentoring program?” In this article, we cover all things mentoring program measurement: why it’s important, how to do it, the key metrics to use, and best practices.

The Importance of Measuring Mentoring Programs

So, why should you measure something as seemingly intangible as mentorship? The answer lies in the old adage, “What gets measured gets managed.” When a program coordinator knows how to measure a mentoring program’s health, the organization can enhance, adapt, and optimize the program for better outcomes. Let’s briefly look at the benefits of measuring your mentoring program’s success.

Identify blind spots

Measuring your mentoring program helps identify strengths and weaknesses within your mentorship initiatives, as well as what’s lacking and what’s unnecessary. This, in turn, promotes accountability among mentors and mentees alike, fostering an environment of continuous improvement. Additional feedback loops can also be established following these discoveries, allowing participants to voice their experiences and suggestions, which can further refine the program over time.

Refine alignment with organizational goals

Evaluating mentoring programs can also help organizations better align their mentoring objectives with broader organizational goals. This alignment ensures that the mentoring program is not just another box-ticking exercise but a strategically sound investment in your workforce.

Enhance participant satisfaction

When mentors and mentees know the program is continually being measured so it can keep improving, they feel their experiences are acknowledged and valued. That increased satisfaction, in turn, raises their level of engagement in your program.

Identify best practices

Measurement also allows you to uncover what works for your program and your organization. By shedding light on what works well, organizations can replicate successful methods across the board, maximizing the return on their mentoring investments.

Celebrate your wins and build credibility

Celebrating your program’s milestones and achievements reinforces the positive impact of mentorship and motivates participants to stay committed to their development journeys. Publicly recognizing progress not only boosts morale but also builds credibility for your program, and for you as the program coordinator, by demonstrating that the mentoring initiative is delivering tangible results. This sense of recognition can be a powerful driver for both mentors and mentees, cultivating a culture of appreciation and growth within the organization. It also positions you as a driving force in fostering engagement within your organization, and as a champion for the wellness and growth of your people.

Secure program longevity

One of the biggest reasons to measure your mentoring program is to secure its future. When you can report on the tangible benefits of the program to your people and your organization, through both hard numbers and impact statements, leadership, stakeholders, and sponsors will be more than happy to keep investing in your program, and may even consider expanding it!

The Art and Science of Measuring Mentoring Programs, with Mentorloop

A healthy mix of qualitative and quantitative data.

The Art: Qualitative Data

Qualitative data is surfaced throughout your program’s lifecycle and gives you deeper insight into the journey each mentoring pair goes on: what they have learned and how they’ll tackle their next goals.

The platform encourages every participant to actively reflect on their matching experience. This ensures everybody is happy with their match and gives participants the chance to find another match if a pairing doesn’t work out. This feedback is shared with the Program Coordinator and also fed back into the matching algorithm to improve future matches.

Pulse Reports

These check-ins run automatically at key intervals throughout the program to gauge how participants feel and help you understand things like:

- Whether mentoring should continue at your organization

- How likely participants are to recommend the program to others or to participate again

- How participants would rate their opportunities in various areas such as learning, growth, cross-team collaboration, skill development or goals

- Other program-specific success indicators

The Science: Quantitative Data

Quality at Scale

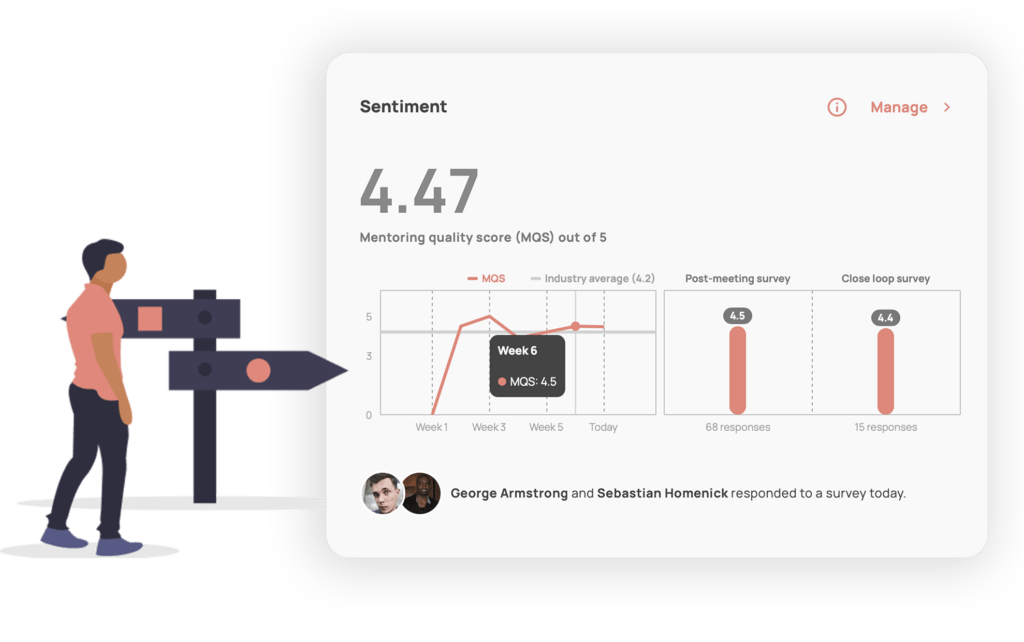

Track your MQS (Mentorloop Quality Score), which weights all of its inputs and generates an average. It measures the satisfaction and quality of mentoring both at the individual level and aggregated at the program level.
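The exact MQS formula isn’t described here. The following is only a minimal Python sketch of the general idea: compute a weighted average per participant, then aggregate across the program. The input names (`match_rating`, `meeting_rating`, `pulse_rating`), the 1 to 5 scale, and the weights are all hypothetical.

```python
from statistics import mean

# Hypothetical inputs and weights for illustration only; these are NOT
# Mentorloop's actual MQS inputs or weighting.
WEIGHTS = {"match_rating": 0.3, "meeting_rating": 0.4, "pulse_rating": 0.3}


def quality_score(ratings: dict[str, float]) -> float:
    """Weighted average of one participant's ratings (each on a 1-5 scale)."""
    # Only use the inputs this participant actually provided,
    # and renormalise the weights over those inputs.
    used = {name: w for name, w in WEIGHTS.items() if name in ratings}
    if not used:
        raise ValueError("participant has no ratings to score")
    total_weight = sum(used.values())
    return sum(ratings[name] * w for name, w in used.items()) / total_weight


def program_score(participants: list[dict[str, float]]) -> float:
    """Program-level score: the mean of the individual participant scores."""
    return mean(quality_score(p) for p in participants)
```

Renormalising over the supplied inputs means a participant who hasn’t answered a pulse survey yet isn’t penalised for the missing rating.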

Milestone Data

Who is at what stage? This matters when you have asynchronous or ‘always-on’ mentoring, where participants may have started their mentoring journeys at different times. You can use this data to group participants and segment your communication. For example, you might bulk-message all of your mentees who have completed their Milestones and suggest they try being a mentor in your next cohort.
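If you export milestone data and work with it yourself, segmenting comes down to a simple grouping step. The sketch below assumes a hypothetical `Participant` record with a role and a current milestone label. It is an illustration only and not Mentorloop’s data model.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Participant:
    # Hypothetical record shape for illustration.
    name: str
    role: str   # "mentor" or "mentee"
    stage: str  # hypothetical milestone label, e.g. "matched" or "completed"


def group_by_stage(participants: list[Participant]) -> dict[str, list[str]]:
    """Map each milestone stage to the names of participants currently at it."""
    groups: dict[str, list[str]] = defaultdict(list)
    for p in participants:
        groups[p.stage].append(p.name)
    return dict(groups)


def mentees_ready_to_mentor(participants: list[Participant],
                            final_stage: str = "completed") -> list[str]:
    """Mentees who reached the final milestone: candidates to invite as mentors."""
    return [p.name for p in participants
            if p.role == "mentee" and p.stage == final_stage]
```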

Goals and Tasks

Track participants’ progress towards their goals and tasks. There’s no need to check in regularly, because you can access this via the Goals tab at any time. These goals can be professional, such as getting a promotion, or personal, such as developing a new skill or building a personal advisory board.

Refer a Friend Suggestions

This is an indicator of the virality of your program and also gives you a sense of how much participants value their participation.

Always On: Live Data

If you’re running a program without a mentoring platform, please, PLEASE, don’t wait until the end to evaluate it. Programs that only evaluate their health at the end have waited until it’s too late to change course.

Bringing this data together helps you build your organisational mentoring narrative by evaluating in real time.

We’re no strangers to communication platforms. Whether you’re a fan of Microsoft Teams, Zoom, WhatsApp, text, phone calls or a good old-fashioned coffee, all are equally great ways of communicating. Check out our suite of apps and integrations to weave this seamlessly into your organisation’s communications ecosystem.

The best mentoring happens where people already operate and communicate, and where they feel most comfortable. So, as controversial as it sounds, the best mentoring sometimes happens outside the Mentorloop platform, which raises the question:

How do you know if in-person mentoring is going well?

Put simply, we just ask.

Evaluation is often an afterthought in mentoring programs, or gets pushed to the end of the program (6, 12 or even 18 months in). By then it’s too late to course-correct or make vital changes that could have taken your program down a completely different path.

Mentorloop asks participants a simple question after key moments (like a meeting) and periodically throughout their mentoring journey. It then aggregates the responses and surfaces program sentiment data, making it easy to know how, and when, to intervene.
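To illustrate the aggregation step, here is a minimal Python sketch. It averages post-meeting ratings by calendar month and flags months that dip below a threshold. The 1 to 5 rating scale, the monthly grouping, and the 3.5 threshold are assumptions for illustration. They do not describe how Mentorloop computes Sentiment.

```python
from collections import defaultdict
from datetime import date
from statistics import mean


def monthly_sentiment(responses: list[tuple[date, int]]) -> dict[str, float]:
    """Average 1-5 rating per calendar month, keyed 'YYYY-MM', in date order."""
    buckets: dict[str, list[int]] = defaultdict(list)
    for day, rating in responses:
        buckets[f"{day.year}-{day.month:02d}"].append(rating)
    return {month: mean(ratings) for month, ratings in sorted(buckets.items())}


def months_needing_attention(sentiment: dict[str, float],
                             threshold: float = 3.5) -> list[str]:
    """Hypothetical rule: flag any month whose average falls below the threshold."""
    return [month for month, avg in sentiment.items() if avg < threshold]
```

Grouping by month is just one choice. Grouping by week or by cohort works the same way and only changes the key that the ratings are bucketed under.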

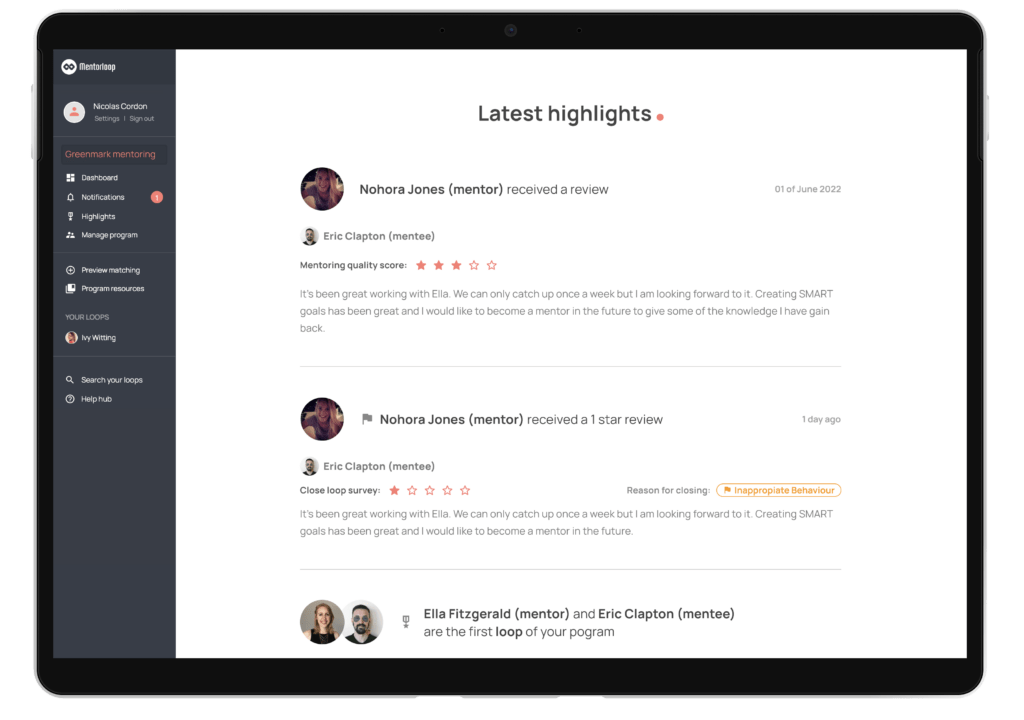

At the same time, participants can add private feedback on how the relationship is faring. This feedback is also collected to build mentoring stories. That makes it easier than ever to build a narrative around your mentoring program’s impact and to surface Mentoring Champions who can help you promote the program in subsequent recruitment drives.

Mentoring Program Health: 5 Key Metrics to Measure

At Mentorloop, we have 5 main areas of program health that we encourage program coordinators to keep an eye on:

- Program Growth

- Match Rate

- Participant Growth

- Program Health & Sentiment

- Contextual Feedback

Let’s talk about each in a bit more depth.

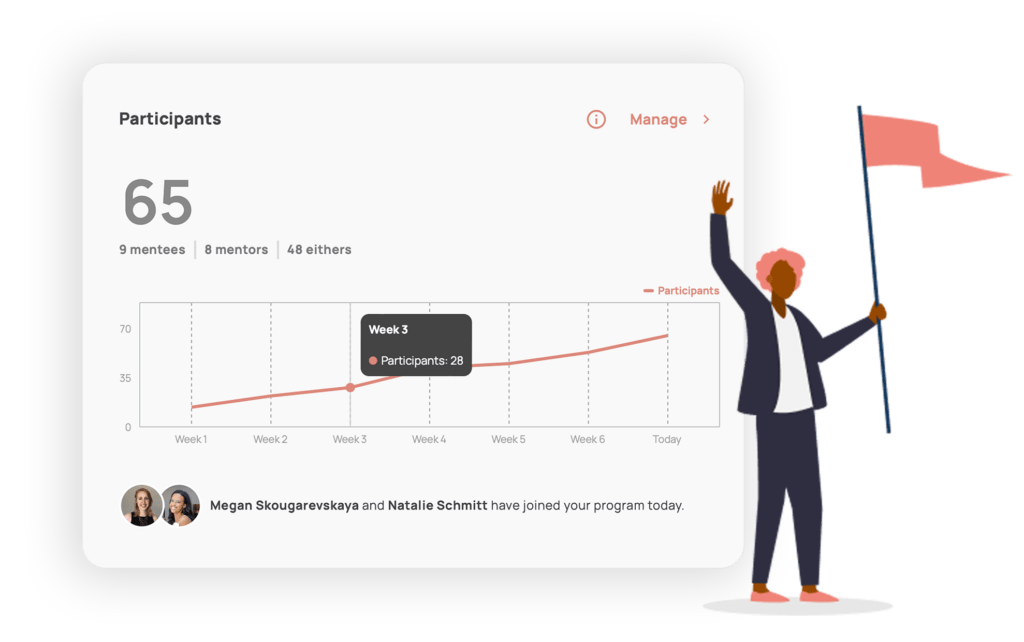

Program Growth

One way to know if your program is achieving success is by tracking how much it’s growing. It’s also a good way to know if your marketing and recruitment efforts are successful.

If you’re running an Always-On program, check whether the number of participants is increasing, decreasing, or holding steady. If you’re running On-Off programs or recruiting in Cohorts, simply compare the previous round’s sign-ups with the current round’s.
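The comparison itself is simple arithmetic. Here is a minimal sketch. It assumes you have sign-up counts per round for cohort programs, or participant counts per period (oldest first) for Always-On programs. The function names are hypothetical.

```python
def percent_change(previous: int, current: int) -> float:
    """Percentage change in sign-ups from the previous round to the current one."""
    if previous == 0:
        raise ValueError("previous round had no sign-ups; percentage change is undefined")
    return (current - previous) * 100 / previous


def trend(counts: list[int]) -> str:
    """Classify an Always-On program by comparing its latest count with its earliest."""
    if len(counts) < 2:
        raise ValueError("need at least two data points to spot a trend")
    first, last = counts[0], counts[-1]
    if last > first:
        return "increasing"
    if last < first:
        return "decreasing"
    return "steady"
```

For example, going from 40 sign-ups in one round to 50 in the next is a 25% increase.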

On Mentorloop, the Participants chart on your Program Dashboard tells you exactly how your program is developing over time and if you’re meeting your recruitment targets. Your Insights will also tell you how you’re tracking towards your recruitment target or how many new participants have signed up over a period of time.

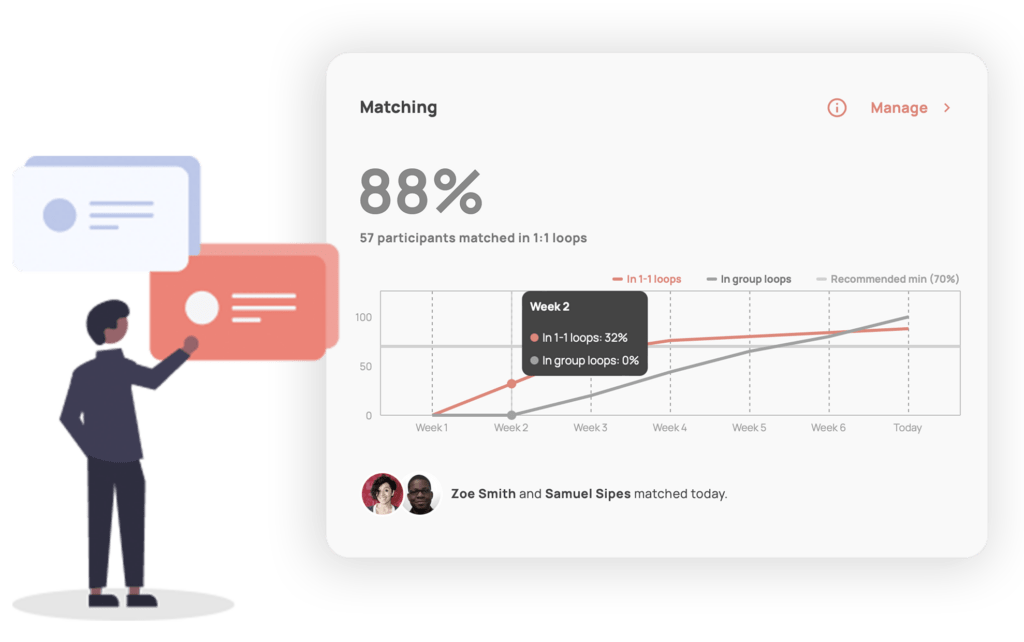

Match Rate

Tracking how much of your program’s cohort is matched with a mentoring partner is a great indicator of success. After all, the whole point of a mentoring program is to create mentoring relationships. Make sure you’re keeping an eye on those who are yet to find a match in your program and try to keep that number down.
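As a rough illustration, match rate can be computed as the share of participants who appear in at least one match. The unmatched remainder is the list of people to follow up with. This sketch assumes you have a set of participant IDs and a list of mentor and mentee pairs. Both data shapes are hypothetical.

```python
def match_rate(participant_ids: set[str],
               matches: list[tuple[str, str]]) -> tuple[float, set[str]]:
    """Return (percentage of participants matched, set of unmatched participants)."""
    if not participant_ids:
        raise ValueError("no participants in the program")
    # Anyone who appears in any pair counts as matched; ignore IDs outside the program.
    matched = {pid for pair in matches for pid in pair} & participant_ids
    unmatched = participant_ids - matched
    return len(matched) * 100 / len(participant_ids), unmatched
```

A participant in several matches is counted once, so the rate reflects people rather than relationships.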

Mentorloop’s Matching chart on the Program Dashboard keeps you on top of this. It tells you how many connections you’re making and helps you track whether you’ve got stragglers and people who still need to be matched. Matching Insights will also tell you if you have draft matches waiting to be approved or if your participants have matched themselves recently.

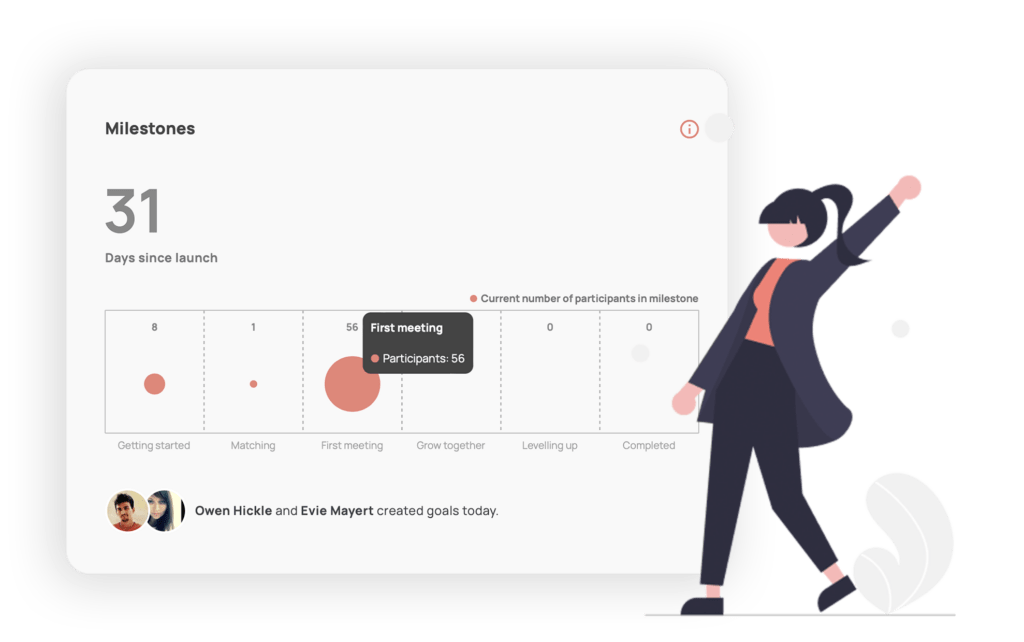

Participant Growth

No matter how big and engaged a mentoring program is, it’s all for naught if the participants aren’t growing, so this metric is vital. Keep an eye on how your mentors and mentees are tracking towards their goals. You can do this manually through surveys, but mentoring software makes this tricky task much easier.

On Mentorloop, we use Milestones to tell you who is at what stage of their mentoring journey and highlight the progress your participants are making. You can also use the Goals feature to see what kind of goals your participants are setting and the progress they’re making towards these goals.

Program Health & Sentiment

The success of a mentoring program doesn’t just rely on its growth but also on the program’s health and therefore the health of the relationships within it. Are your participants having a good experience with their mentoring partners? Are their meetings going well? To track this, it’s best to simply ask. Obviously, this is tedious and time-consuming to do one-to-one, so mentoring software is the way to go here.

On Mentorloop, Sentiment gives you real-time feedback and analysis of your program’s health. This helps keep you on top of the ratings participants are giving their meetings and general mentoring experience. It gives you an easy-to-understand chart with a quantitative representation of program health, tells you how your program’s health is trending over time, and allows you to dive into the context should you want to.

Contextual Feedback

We always say that the human aspect of mentoring should never be taken away from measuring your mentoring program. That’s why we never measure mentoring programs by the hours participants spend in mentoring meetings or by other vanity metrics. That said, we admit the human aspect can be difficult to measure. You can send out surveys or arrange feedback sessions manually, of course, but the easiest way is to enlist the help of mentoring software.

At Mentorloop, we don’t believe in reducing people to just numbers because we believe that mentoring, at its core, is all about human connection. So we make sure that you get to keep a finger on the pulse of your program through Highlights. Highlights gives you the latest activity in your program – be it new people joining or contextual feedback from your mentors and mentees.


Measuring Mentoring Program Impact

When it comes to measurement, many program coordinators stop at tracking program health — things like growth, match rates, or participant satisfaction. While these are important, they don’t tell the full story. To demonstrate the true value of mentoring, you also need to measure program impact: the outcomes mentoring has on people’s careers, confidence, skills, and sense of belonging.

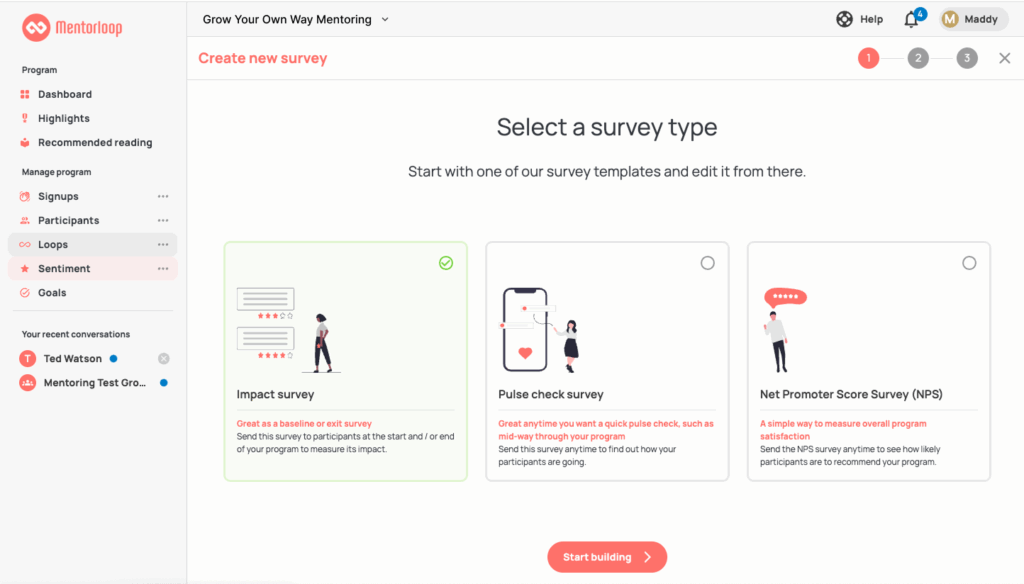

This is where Mentorloop Surveys come in as one of the most effective tools. When designed thoughtfully, they capture both quantitative and qualitative feedback, helping you understand not just what participants did, but how mentoring influenced their personal and professional development.

Some best practices include:

- Use pulse check surveys during the program for quick, real-time check-ins (Are people engaged? Do they feel supported?)

- Add impact-focused surveys at key milestones (e.g., after 3–6 months or at program completion) to surface longer-term outcomes

- Mix scaled questions with open text to balance measurable data with participant stories

- Keep it simple. The best surveys are short, clear, and relevant to the participant’s journey

By layering program health metrics (e.g., match quality, sentiment, growth) with program impact measures (e.g., skill development, promotions, increased confidence, stronger networks), you gain a holistic view of success. Health data helps you improve the experience in the moment; impact data builds the case for long-term investment and demonstrates how mentoring contributes to organizational goals.

In short: Your health metrics tell you how well the program is running. Impact measurement tells you why it matters.

Together, they allow you to tell a complete story that resonates with participants, stakeholders, and leadership alike.

What NOT to Measure

Just as we hope you don’t measure your personal relationships by the hours you spend together, we also don’t believe in measuring mentoring relationships this way.

Just as ‘quality time’ matters in personal and family relationships, it also matters in professional relationships like mentoring and coaching.

It’s why seeing a therapist can sometimes feel transformative, while at other times it may feel like a rip-off. Measuring the impact of our relationships in ‘hours’ is never really accurate.

Human relationships are difficult to measure. In mentoring programs this difficulty is compounded: business metrics take a long time to track, and the impact gets lost along the way if there is no measure of quality.

So why do other mentoring platforms measure mentoring by number of sessions, or worse, number of hours?

As Harvard Business Review says, positive professional relationships have three traits in common:

1. The individual understands the relevance of the relationship;

2. They understand whether, and why, they are transactional or transformational;

3. And they are committed to maintaining the relationship even when they are in conflict.

Thinking about these three traits can help you assess your key relationships and identify opportunities to engage and connect in ways that deliver results when needed. If you’ve realised that some of your professional relationships need work, identify the key relationships that have the most influence on your success, and conduct a quick audit of each of the three traits.

A lot of time and energy goes into developing and maintaining an organisational mentoring program, and organisations want to know those efforts are well spent. And rightly so! They should seek these answers from the people who run their mentoring programs, and they should expect the tools that help run those programs to surface this information.

You’ll notice that other mentoring platforms often measure and focus solely on ‘vanity metrics’ cloaked as ‘engagement activities’. These may include recording and reporting on the number of messages and meetings, along with:

- Number of sign ups

- Number of relationships

- Number of mentoring sessions

- Number of hours mentoring

- Number of goals set

These numbers, on their own, fail to surface the quality and sentiment of your program. They merely describe whether your program is increasing or decreasing in size and very loosely if something is happening.

Reporting On Your Success

Gathering all these data points together and presenting them in a way that’s easy to understand can be tedious. However, it’s necessary if you want everyone to see the value in your program and to secure its future. That’s why we’ve made this task easy on Mentorloop.

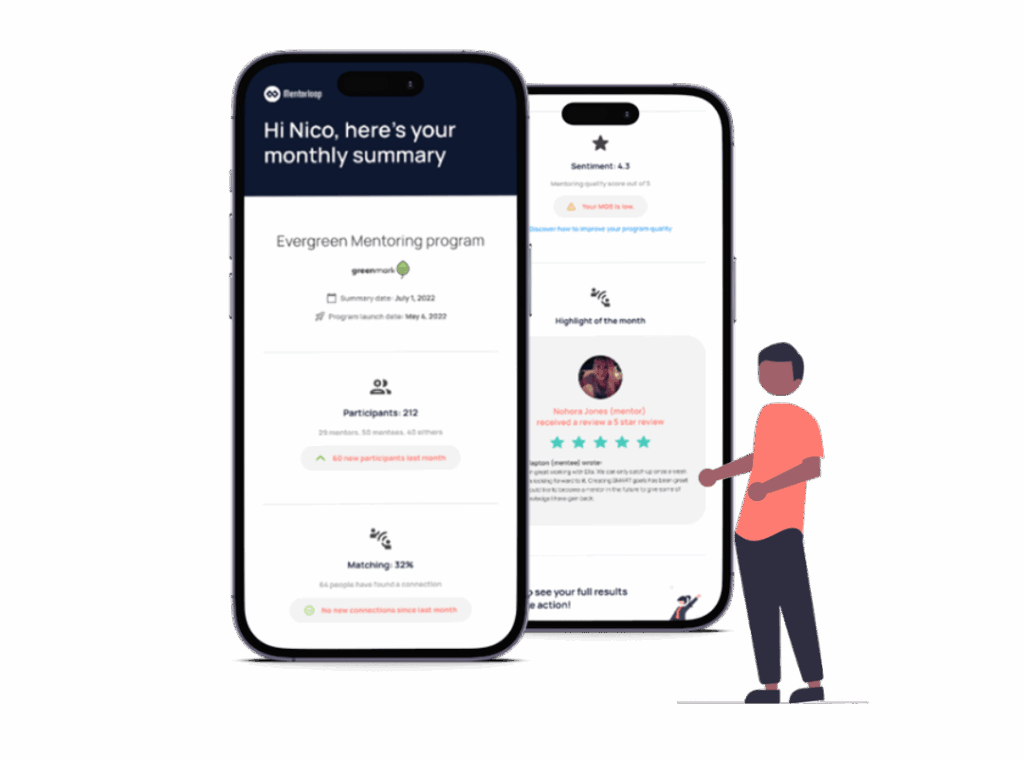

Monthly Digests

We send you a monthly email with a snapshot of the previous month of your program. It includes data on all of the areas mentioned above, so you can quickly see how your program performed on all fronts.

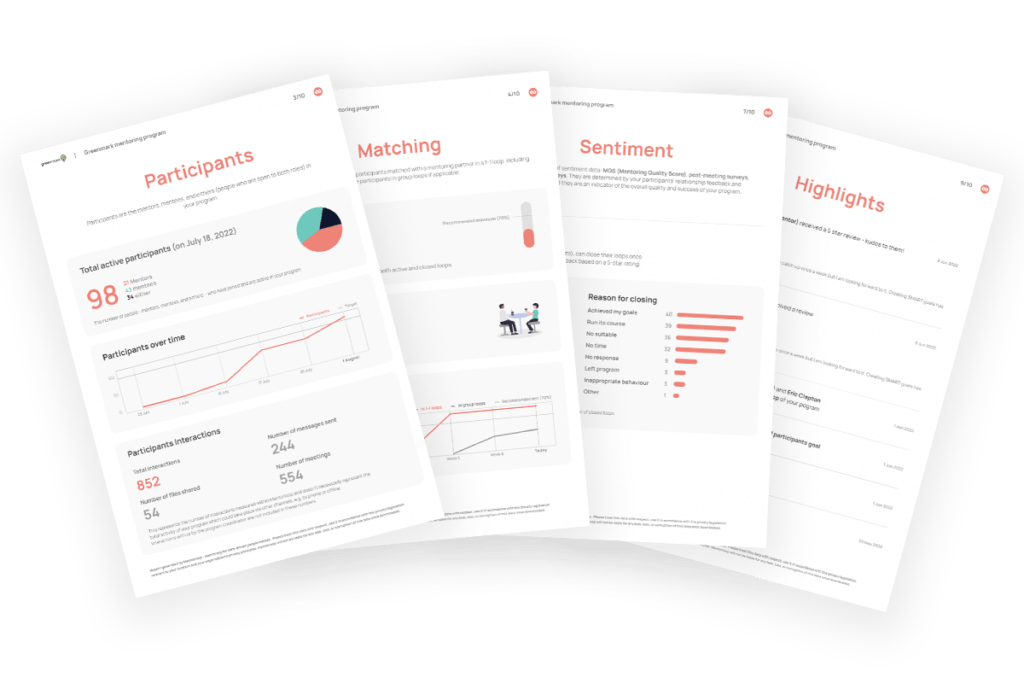

Downloadable Reports

Need a more in-depth report on your program for leadership and other stakeholders? With Mentorloop, you can download a PDF version of your report at any time for any purpose.

Final Thoughts

Measuring a mentoring program effectively involves understanding its importance, knowing what key metrics to measure, utilizing your mentoring software’s capabilities, and reporting on your success. And if you are looking for mentoring software that will not only walk you through how to measure your mentoring program but actively help you do it, book a demo with us and get started building an engaging, scalable, and measurable mentoring program. Or get started today for free, no credit card required!
